Future Horizons:

10-year horizon

Consumer-grade glasses and adaptive user interfaces

25-year horizon

Individually tailored reality becomes the norm

More ambitiously, AR could unlock an entirely new technological paradigm. “Spatial computing” envisages devices that can understand and interact with their surroundings.18 Sensor-laden smart glasses that project digital content onto the user’s environment could bring the idea to life. This transition will be tightly coupled to advances in AI. Increasingly powerful computer vision will allow devices to understand their spatial context, while language and voice capabilities will provide a natural, hands-free way to interface with them.19

A crucial aspect will be innovations in user experience:20 how to present virtual content to people in ways that are both intuitive and powerful. This will require some standardisation in interfaces so that people can seamlessly switch between AR apps. Always-on AR will require careful design to ensure that users aren’t overwhelmed with information or notifications.

To achieve this, AR devices will have to become adaptive, using AI to analyse data from physiological sensors and cameras to understand the user. This will require breakthroughs in continual learning21 — so that AI can update models of the user on the fly — and activity recognition,22 so that AR devices can understand the user's context and anticipate their needs.

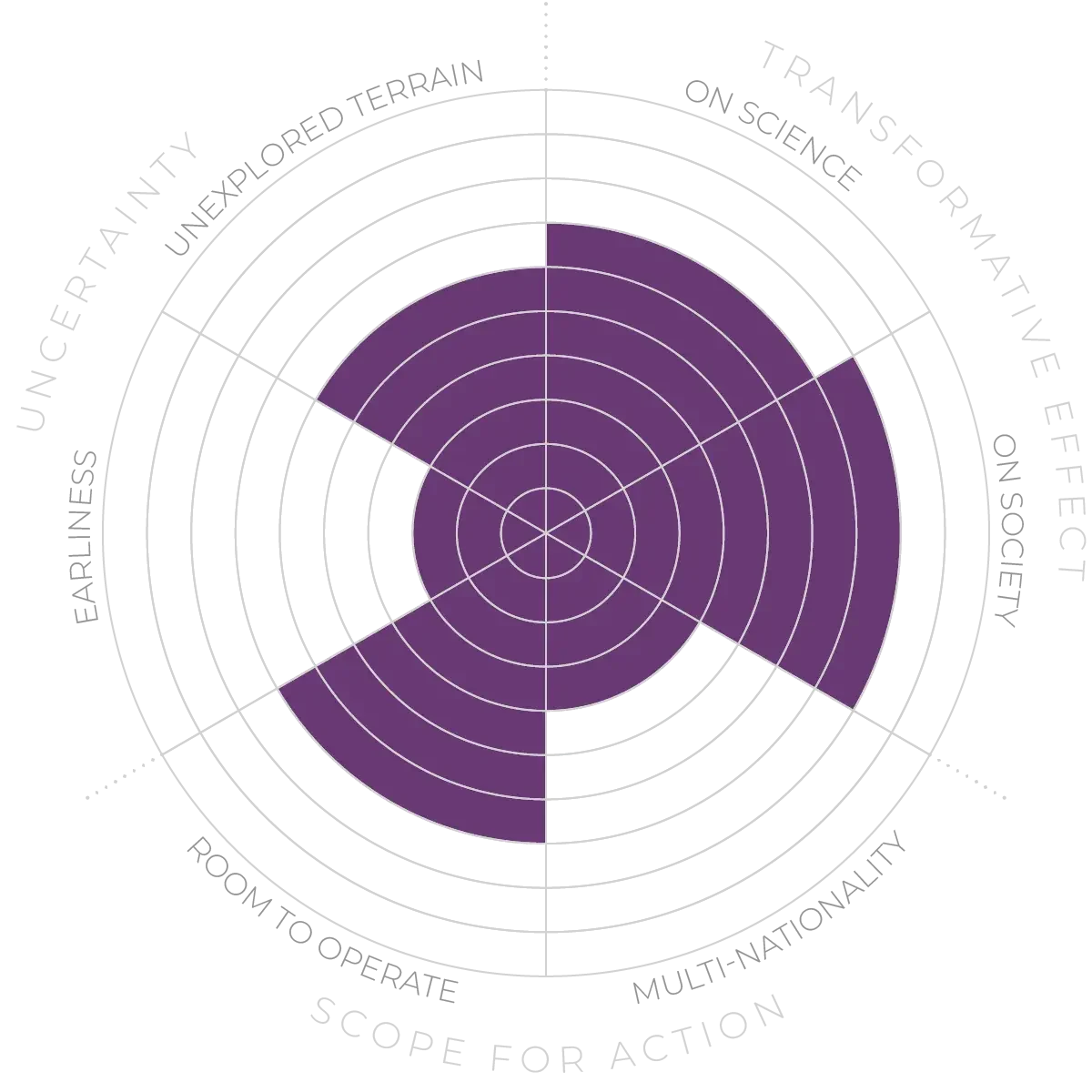

Augmented experiences - Anticipation Scores

The Anticipation Potential of a research field is determined by the capacity for impactful action in the present, considering possible future transformative breakthroughs in a field over a 25-year outlook. A field with a high Anticipation Potential, therefore, combines the potential range of future transformative possibilities engendered by a research area with a wide field of opportunities for action in the present. We asked researchers in the field to anticipate:

- The uncertainty related to future science breakthroughs in the field

- The transformative effect anticipated breakthroughs may have on research and society

- The scope for action in the present in relation to anticipated breakthroughs

This chart summarises their responses to each of these elements, which, when combined, provide the Anticipation Potential for the topic. See methodology for more information.